Helping people with anorexia understand hunger again.

We designed a recovery companion app and wearable that translate invisible biosignals into data visualisations, giving people with anorexia nervosa a way to see what their body is doing when their internal sense of hunger and fullness is unreliable.

Tools

Figma / Figma Make

Claude

ChatGPT

Team

2 Product Designers

Contribution

Research - Led the interoception research, helped source and synthesize the three papers that grounded the entire design direction. Researched the landscape of biosignal monitoring technology to determine which signals were most relevant and feasible.

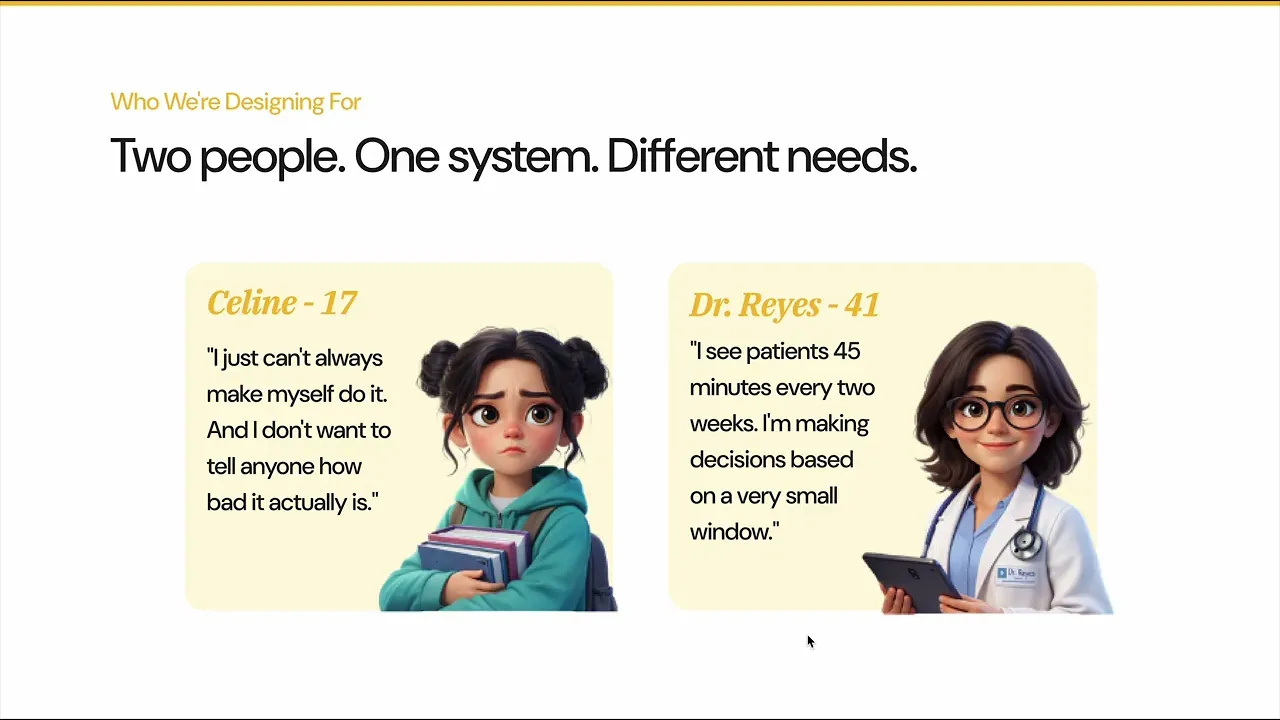

Personas - Co-developed both Celine and Dr. Reyes with my partner, contributing to the background narratives, pain points, and goals.

Concept & ideation - Worked with my partner to finalise the core concept: the biosignal-to-flower system and the design principles that held the whole system together.

Design & build - Designed and built my assigned screens in Figma Make, contributing to the overall visual system and working with my partner to maintain design coherence across all screens.

Presentation - Wrote the presentation script, use case narratives, elevator pitch, and Devpost write-up. Co-delivered the final presentation with my partner.

00 OVERVIEW

About

FigBuild is Figma’s annual student design-a-thon, where university students from around the world come together over a short, intensive period to design and build original product concepts using Figma.

Unlike traditional hackathons that prioritize speed or technical output, FigBuild emphasizes thoughtful problem framing, strong user experience design, and the ability to clearly communicate a product’s concept, interaction, and impact through storytelling and prototyping.

The Challenge

The FigBuild prompt challenged teams to explore and design for human senses beyond the traditional five (sight, sound, touch, taste, and smell), focusing on how technology might better support or interpret internal bodily experiences.

Participants were asked to identify an under-explored sense, understand its role in everyday life, and imagine a speculative technological intervention that could make that sense more perceivable, interpretable, or actionable.

The Solution

Designed for people recovering from anorexia nervosa, Attune makes hidden body signals visible so patients can recognize hunger when their cues feel absent or confusing. It translates clinical biosignals into an intuitive visual system, helping users eat with more confidence and rebuild trust in their body during recovery.

Visualizing hunger as a feeling, not a number

Gradual access to clinical data over recovery

Sharing progress with your support system

02 USER JOURNEY

A Day in Celine's Life

The most powerful way to understand what Attune does is to follow one person through three real moments, the kind where the difficulty is invisible and the system makes it visible.

USE CASE 1

Understanding what her body is saying

THE SCENARIO

Celine sits in the school cafeteria. Everyone around her is eating, but she feels nothing: no hunger, no fullness, just static. She's not choosing to ignore her body. She genuinely can't hear it.

WHAT HER BODY IS BROADCASTING

"I'm rising. That restless feeling has a name." - Ghrelin

"I'm a little low. The fog makes sense right now." - Glucose

"I'm stirring. That unsettled feeling is just movement." - Motility

"I'm a little high. That tension you're carrying is real." - Cortisol

ATTUNE

Celine glances at her phone and sees her home screen come to life as bees begin to buzz around the ghrelin flower. This signals that her body is experiencing hunger. Data from the wearable patch is transmitted to the app, and as ghrelin levels rise, the bees activate, visually indicating her body's need for nourishment.

ATTUNE

She opens the app and notices that her ghrelin levels are rising. A chart shows how her hunger has fluctuated over time, highlighting recent dips and peaks. She then moves to her garden view, where each flower represents a different hormone tracked by the patch. Some flowers appear translucent, indicating they are out of range. She taps on them to learn what each signal does in her body, helping her better understand what she’s feeling and why.

THE OUTCOME

For the first time, Celine doesn't have to guess what her body is doing. The garden does the sensing for her, translating invisible biomarkers into something she can see, understand, and respond to.

USE CASE 2

Accessing her full data story

THE SCENARIO

After months of treatment, Celine is making progress, but she feels like she's flying blind. She knows her doctor checks her data. She knows patterns are being tracked. But she has no visibility into her own body's story. The data exists. She just can't see it.

That gap feels infantilising, and it quietly erodes her trust in her own recovery.

WHAT HER BODY IS BROADCASTING

Ghrelin levels - tracked across 7 days

Cortisol spikes - correlated with skipped meals

What her doctor sees

Her doctor's view shows every metric collected from her patch, highlights trends across them, and gives Dr. Reyes space to make notes and conduct data analysis.

ATTUNE

After a strong session with Dr. Reyes, Celine is granted full access to her analytical data through the Grow screen. She can now skim each biomarker with a plain-language summary so she's never overwhelmed. She's not just a patient anymore. She's a participant in her own care.

THE OUTCOME

Celine scrolls through a week of data and spots something herself: her stress levels spike every Sunday night, right before school. She brings it to her next session. Dr. Reyes didn't catch it. Celine did. The data gave her agency, and agency is what recovery is built on.

USE CASE 3

Showing progress in a meaningful way

THE SCENARIO

Recovery is invisible work. Celine shows up every day: she checks in, she eats, she tries. But there's no proof of it. No artifact. No record of the small victories that nobody sees.

WHAT HER BODY IS BROADCASTING

"I'm quiet today. Your body is holding more than usual." - HRV

"I'm warm. You've been taking care of yourself." - Temperature

"I'm resting. Something steadied today." - Cortisol

ATTUNE

Every day that Celine engages with her body through Attune, she grows flowers. Each flower is earned, a visible representation of the work she's putting in. On the Gather screen, she can arrange those flowers into bouquets. She can keep them for herself as a private collection of proof that says "I did this," or she can gift a bouquet to someone she loves, paired with a personal message.

THE OUTCOME

At her three-month mark, Celine sends her mom a bouquet she's been quietly building for weeks. Her mom receives it and feels an overwhelming sense of pride. No clinical report. No awkward check-in conversation. Just flowers, and everything they mean. Celine didn't have to find the words. Attune found them for her.

01 RESEARCH

Understanding the Premise

Given the time constraints, we relied on secondary research and academic literature to build a foundational understanding of our users and identify key problem spaces. Among these, one area of research stood out as particularly influential in shaping our direction:

Gap in the Treatment

For someone in treatment for an eating disorder, a clinician appointment happens roughly once a week. That leaves 167 hours every week where the patient is alone with a body they do not trust, reading signals they cannot accurately interpret, and making decisions based on perceptions that are neurologically compromised.

How do you measure and display an interoceptive sense in a way that feels safe, not clinical, for someone whose interoceptive system is unreliable?

Exploring the interoception landscape

We mapped the full landscape of what could theoretically be measured from CGMs (Continuous Glucose Monitors).

We identified a major technological gap: no wearable currently measures hunger hormones like ghrelin and leptin passively.

Inner Garden's IBM (Interoceptive Biomarker Monitor) patch is speculative but grounded in the direction the technology is heading. CGMs like Dexcom G7 and Abbott FreeStyle Libre were referenced as real precedents for passive biosignal monitoring. The SenseSupport system, which uses CGM meal-detection to deliver just-in-time adaptive interventions, directly validated our approach.

The eight signals we chose to display

Who are we building for?

We did not want to replace therapy, so we designed this product for people who are already seeking professional help. We therefore created two personas: one for the patient (Celine) and one for the doctor (Dr. Reyes).

Core Design Principles

We intentionally chose a visual, playful direction because we didn't want the app to feel clinical. Since it translates internal signals into feelings, we designed it to be expressive and metaphorical, not medical.

01

Witness Not Instruct

The body voice names current state without directing action. "I'm rising" not "you should eat." Instructive language in this context is experienced as accusation.

02

Felt Language Only

No numbers, percentages, ranges, or clinical terms on the user-facing layer, ever.

03

Contextual Desired State

No signal is simply "high is good." Ghrelin rising and unanswered is concerning. Ghrelin rising after a meal would be different. The garden reads context.

04

No Pass/Fail

Red and green indicators have been removed entirely. All data presentation must be positional and observational, not evaluative.

05

Flowers As Weather Vanes

The garden mirrors what the body is feeling. It does not evaluate or judge. It shows the wind direction; what you do with that information is always yours.

06

Prescribed Not Downloaded

Attune sits alongside clinical treatment, not instead of it. It is a perceptual prosthetic. The clinician is still present, still necessary, still the expert.
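To make the Contextual Desired State and Witness Not Instruct principles more concrete, here is a minimal TypeScript sketch of how the garden might read a single ghrelin reading in context. It is illustrative only: the `GhrelinReading` and `GardenCue` shapes, the `readGhrelin` function, the 120-minute "unanswered" cut-off, and every phrase except Celine's ghrelin voice are hypothetical assumptions, not part of Attune's actual design or any clinical rule.

```typescript
// Hypothetical sketch: the same "rising" trend is read differently depending on
// meal context, and the output is felt language with no numbers (principles 01-03).
// All names, thresholds, and placeholder phrases are assumptions for illustration.

type Trend = "rising" | "steady" | "falling";

interface GhrelinReading {
  trend: Trend;
  minutesSinceLastMeal: number | null; // null = no meal logged yet (assumption)
}

// Assumed cut-off after which a rise counts as "unanswered"; a real value would need clinical input.
const UNANSWERED_AFTER_MINUTES = 120;

interface GardenCue {
  beesActive: boolean; // whether bees buzz around the ghrelin flower
  voice: string;       // witness-style body voice, never an instruction
}

function readGhrelin(r: GhrelinReading): GardenCue {
  const unanswered =
    r.minutesSinceLastMeal === null || r.minutesSinceLastMeal >= UNANSWERED_AFTER_MINUTES;

  if (r.trend === "rising" && unanswered) {
    // Rising and unanswered: the case the design treats as concerning.
    return { beesActive: true, voice: "I'm rising. That restless feeling has a name." };
  }
  if (r.trend === "rising") {
    // Rising soon after a meal reads differently (placeholder wording).
    return { beesActive: false, voice: "I'm still shifting. Something was just answered." };
  }
  // Steady or falling (placeholder wording).
  return { beesActive: false, voice: "I'm quiet right now." };
}

// Usage: identical trends, different context, different cues.
console.log(readGhrelin({ trend: "rising", minutesSinceLastMeal: null }).beesActive); // true
console.log(readGhrelin({ trend: "rising", minutesSinceLastMeal: 30 }).beesActive);   // false
```

Keeping the context check inside one function means the garden never treats a raw level as good or bad on its own, and the returned `voice` strings contain no numbers, in line with the Felt Language Only principle.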

Our Final Submission
